Ogham MCP ᚛ᚑᚌᚆᚐᚋ᚜

Pronounced "OH-um" in modern Irish.

Your AI keeps forgetting what you told it yesterday. Ogham fixes that. Save a memory in Claude Desktop, pick it up in Cursor, Claude Code, or Codex CLI.

One PostgreSQL database, every client.

Open source, MIT licensed.

Hybrid Search

Vector similarity + keyword matching, merged with Reciprocal Rank Fusion. Find memories by meaning or exact terms, in a single SQL query.

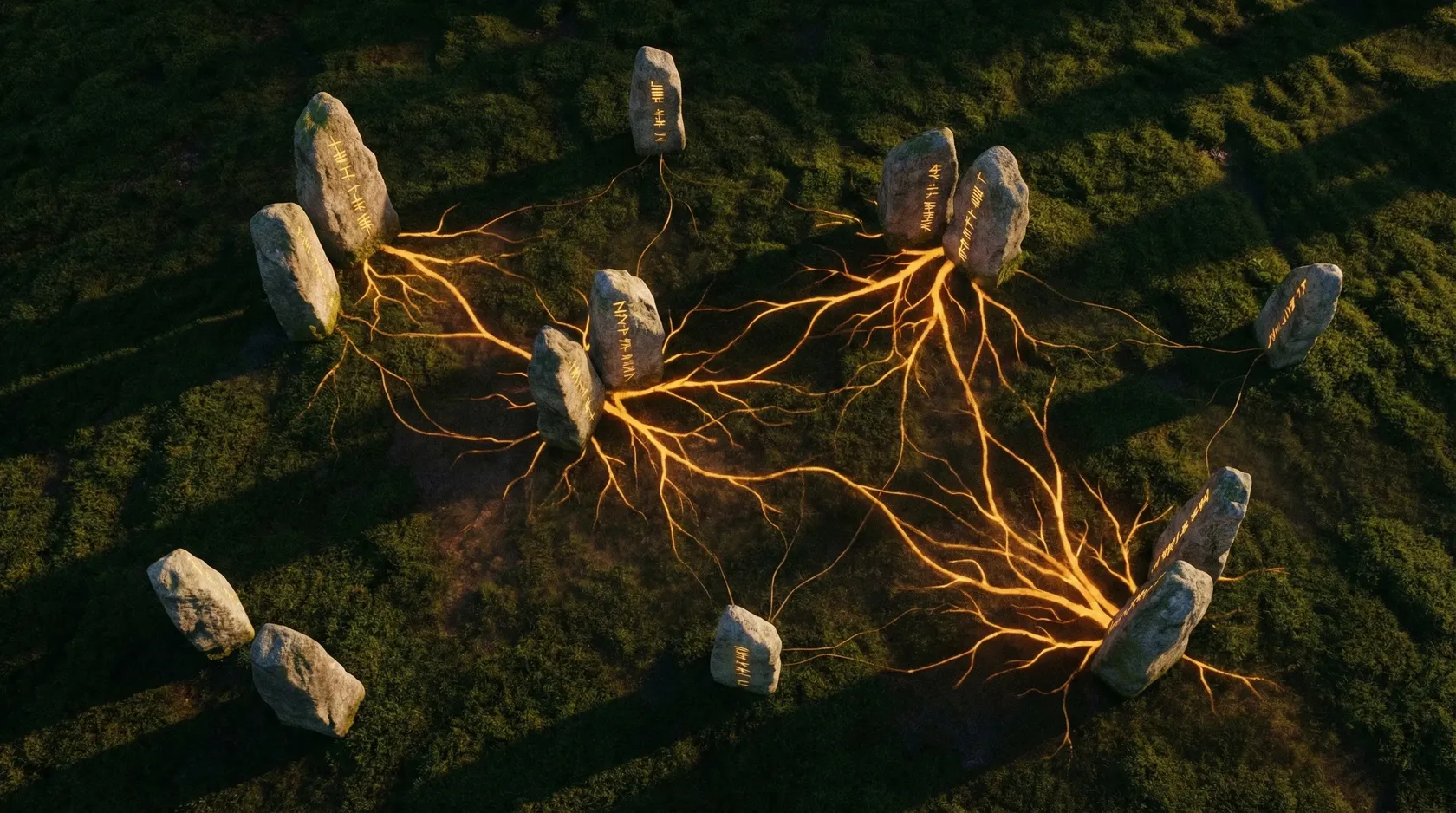

Knowledge Graph

Memories auto-link by embedding similarity. Traverse relationships with recursive CTEs. No graph database, no LLM in the write path.
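As a rough illustration of traversal with a recursive CTE, the sketch below walks outward from one memory through its links. It uses Python's built-in `sqlite3` as a stand-in so it runs without a server; the `memory_links` table, its columns, the directed edges, and the `related` helper are all assumptions made for this example, not Ogham's actual schema or API.

```python
import sqlite3

# In-memory SQLite stands in for PostgreSQL; table and column names are hypothetical.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE memory_links (source_id TEXT, target_id TEXT)")
conn.executemany(
    "INSERT INTO memory_links VALUES (?, ?)",
    [("a", "b"), ("b", "c"), ("c", "a"), ("d", "e")],
)

def related(conn, start_id, max_depth=2):
    """Return (memory_id, hops) pairs reachable from start_id within max_depth hops."""
    return conn.execute(
        """
        WITH RECURSIVE walk(id, depth) AS (
            SELECT ?, 0                      -- anchor row: the starting memory
            UNION                            -- UNION drops duplicate (id, depth) rows
            SELECT l.target_id, w.depth + 1  -- recursive step: follow outgoing links
            FROM memory_links AS l
            JOIN walk AS w ON l.source_id = w.id
            WHERE w.depth < ?                -- depth cap keeps cycles from looping forever
        )
        SELECT id, MIN(depth) FROM walk
        WHERE id <> ?
        GROUP BY id
        ORDER BY MIN(depth), id
        """,
        (start_id, max_depth, start_id),
    ).fetchall()

print(related(conn, "a"))
```

The depth cap matters because auto-linked memories can form cycles (here `a`, `b`, and `c` link in a loop), and an uncapped recursive query over a cycle would not terminate.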

Cognitive Scoring

ACT-R-inspired ranking boosts frequently accessed, recently used, and well-connected memories. Bayesian confidence lets agents verify or dispute facts. All computed in SQL.

Profile Isolation

Partition memories by context — work, personal, per-project. Switch profiles instantly. Inspired by Severance: what one profile knows, the others don't.

Cross-Client Memory

Store a memory in Claude Desktop, recall it from Cursor or Claude Code. Every client hits the same PostgreSQL database. No sync, no export, no intermediary.

Private by Design

Memories live in your own PostgreSQL instance: Supabase, Neon, or a box under your desk. No cloud service sits between you and your data. Run Ollama for fully local embeddings, or use OpenAI, Mistral, or Voyage AI.

How it works

[Architecture diagram: a database node (Supabase · Neon · self-hosted) and an Embedding Provider node (OpenAI · Mistral · Voyage · Ollama), with Tools connected to the embedding provider.]

When you store a memory, Ogham generates a 512-dimensional embedding with your configured provider, saves the text and vector to PostgreSQL, and links it to similar memories already in the database.
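The linking step can be pictured with a small sketch. It assumes links are chosen by cosine similarity against a fixed threshold; the `0.8` cutoff, the helper names, and the 3-dimensional toy vectors (standing in for 512-dimensional embeddings) are illustrative assumptions rather than Ogham's actual settings.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat a zero vector as similar to nothing
    return dot / (norm_a * norm_b)

def link_candidates(new_vec, stored, threshold=0.8):
    """Return ids of stored memories similar enough to link to the new one.

    The threshold is a hypothetical value chosen for this example.
    """
    return [
        mem_id
        for mem_id, vec in stored.items()
        if cosine_similarity(new_vec, vec) >= threshold
    ]

# Toy embeddings; real ones would come from the configured provider.
stored = {
    "aws-region": [0.9, 0.1, 0.0],
    "lunch-order": [0.0, 0.2, 0.9],
}
links = link_candidates([1.0, 0.0, 0.0], stored)
print(links)
```

Because the comparison is plain vector arithmetic, no LLM call is needed at write time beyond producing the embedding itself.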

Searching uses hybrid retrieval: vector similarity and keyword matching run together. A search for us-east-1 finds the exact match via full-text search, while “which AWS region do we use” finds it via semantic understanding. Both happen in the same query.
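To make the fusion step concrete, here is a minimal sketch of Reciprocal Rank Fusion over two ranked lists. Ogham performs the merge in SQL; this Python version only illustrates the arithmetic. The constant `k=60` is a commonly used default and is an assumption here, as are the memory ids.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Merge ranked id lists: each list adds 1 / (k + rank) to an id's score."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by id for a stable order.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))

# Hypothetical results for one query.
vector_hits = ["m2", "m1", "m3"]   # ordered by embedding similarity
keyword_hits = ["m1", "m4"]        # ordered by full-text match

fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
print(fused)
```

A memory that appears in both lists (`m1`) collects two contributions, so it rises above memories that rank first in only one list.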

Results get re-ranked by four signals:

- Fused semantic + keyword ranking via RRF

- An ACT-R formula that weights how often and how recently each memory was accessed

- A Bayesian confidence score that agents can raise (verified) or lower (disputed)

- A graph centrality boost — memories with more relationship edges rank higher

Memories you use often stay sharp. Memories linked to others outrank identical but isolated ones. Rarely accessed ones fade. Disputed ones drop in ranking without being deleted.
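The access-based and confidence signals can be sketched as follows. The first function is the standard ACT-R base-level activation formula; the second models confidence as the mean of a Beta distribution updated by verify and dispute votes. The decay of `0.5`, the uniform prior, and the function names are assumptions for illustration, and how Ogham weights these signals against each other is not shown here.

```python
import math

def base_level_activation(ages_in_days, decay=0.5):
    """ACT-R base-level activation: ln of the sum of age^-decay over past accesses.

    Each age must be greater than zero. More accesses and more recent
    accesses both raise the value.
    """
    return math.log(sum(age ** -decay for age in ages_in_days))

def confidence(verified, disputed, prior_a=1.0, prior_b=1.0):
    """Posterior mean of a Beta(prior_a, prior_b) belief after the given votes."""
    return (prior_a + verified) / (prior_a + prior_b + verified + disputed)

frequent = base_level_activation([1, 2, 3, 7])  # accessed four times this week
stale = base_level_activation([90])             # accessed once, three months ago

print(frequent > stale)
print(confidence(0, 0), confidence(3, 0), confidence(0, 3))
```

Under this model a disputed memory keeps its row in the database; only its confidence value falls, which lowers its rank.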

Try it

# In any AI client with Ogham connected:

> "Remember that our Azure resource groups use {env}-{service}"

# Later, in the same client or a different one:

> "What do you know about our Azure resource naming?"

It works across clients because they all hit the same PostgreSQL database.